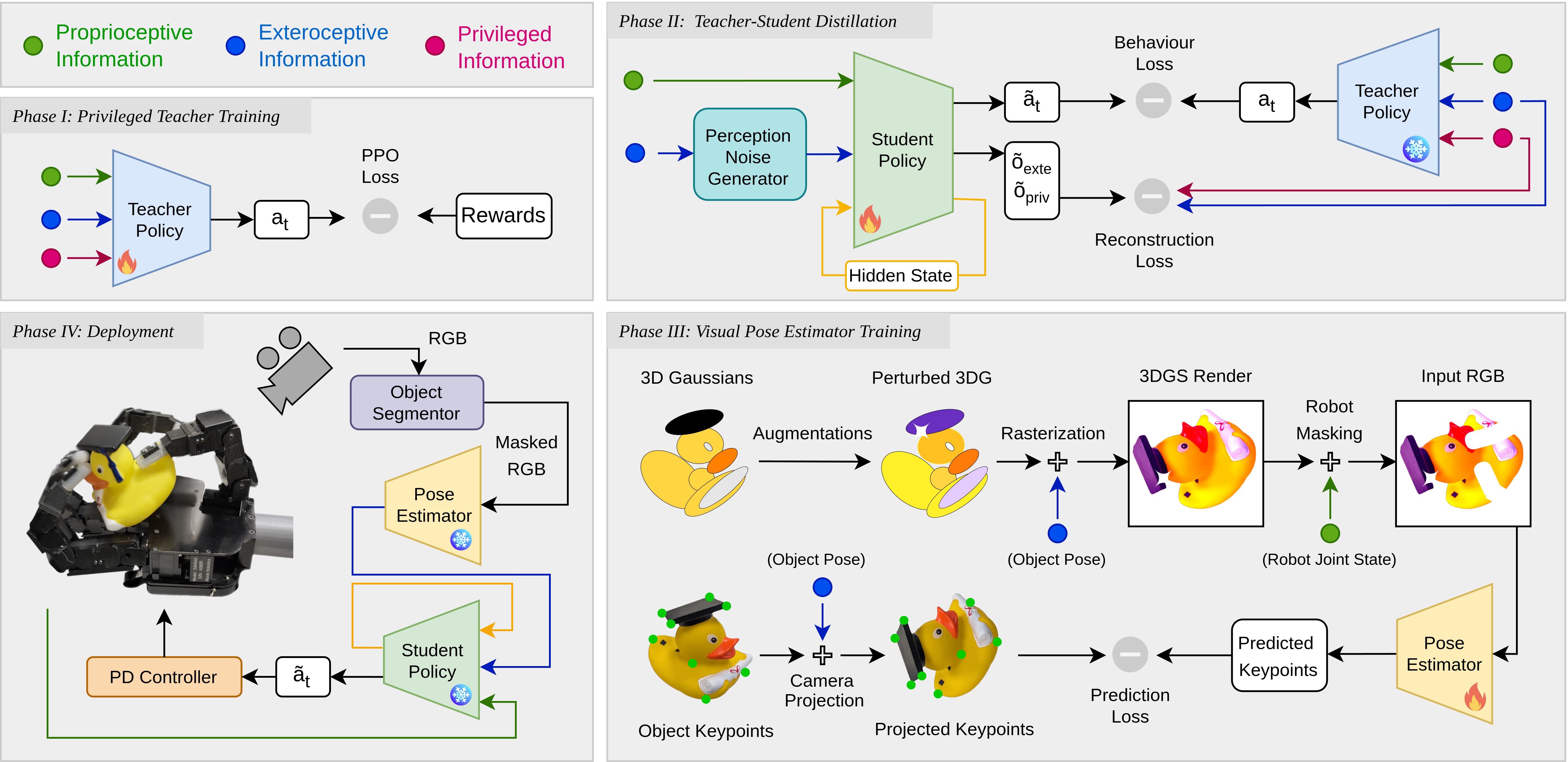

Pipeline Overview

In-hand object reorientation requires precise estimation of the object pose to handle complex task dynamics. While RGB sensing offers rich semantic cues for pose tracking, existing solutions rely on multi-camera setups or costly ray tracing. We present a sim-to-real framework for monocular RGB in-hand reorientation that integrates 3D Gaussian Splatting (3DGS) to bridge the visual sim-to-real gap. Our key insight is to perform domain randomization in the Gaussian representation space: by applying physically consistent, pre-rendering augmentations to 3D Gaussians, we generate photorealistic, randomized visual data for object pose estimation. The manipulation policy is trained using curriculum-based reinforcement learning with teacher–student distillation, enabling efficient learning of complex behaviors. Importantly, both perception and control models can be trained independently on consumer-grade hardware, eliminating the need for large compute clusters. Experiments show that a pose estimator trained with 3DGS data outperforms estimators trained on conventionally rendered data in challenging visual environments. We validate the system on a physical multi-fingered hand equipped with an RGB camera, demonstrating robust reorientation of five diverse objects even under challenging lighting conditions. Our results highlight Gaussian splatting as a practical path for RGB-only dexterous manipulation.
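To make the idea of randomizing in the Gaussian representation space concrete, the sketch below perturbs per-Gaussian colors before any rendering takes place. It is a minimal illustration and not the augmentation set used in this work: the function name `randomize_gaussian_colors`, the parameter ranges, and the choice of a shared brightness gain plus a per-channel tint are all assumptions. It also assumes colors are stored as plain RGB values in [0, 1] rather than spherical-harmonic coefficients.

```python
import numpy as np


def randomize_gaussian_colors(colors, rng, gain_range=(0.6, 1.4),
                              tint_std=0.05, noise_std=0.02):
    """Return a randomized copy of per-Gaussian RGB colors, shape (N, 3).

    Hypothetical sketch: one brightness gain and one per-channel tint are
    shared by every Gaussian, so the whole scene shifts consistently, and a
    small independent noise term is added per Gaussian.
    """
    colors = np.asarray(colors, dtype=np.float64)
    gain = rng.uniform(*gain_range)                     # scene-wide brightness
    tint = 1.0 + rng.normal(0.0, tint_std, size=3)      # scene-wide color cast
    noise = rng.normal(0.0, noise_std, size=colors.shape)  # per-Gaussian jitter
    # Operates on a new array; the input colors are left unchanged.
    return np.clip(colors * gain * tint + noise, 0.0, 1.0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    base_colors = rng.uniform(0.0, 1.0, size=(1000, 3))  # stand-in for a 3DGS scene
    augmented = randomize_gaussian_colors(base_colors, rng)
    print(augmented.shape, augmented.min(), augmented.max())
```

Because the perturbation acts on the Gaussians themselves, every camera view rendered from the augmented set sees the same change, which is one way to read "pre-rendering" augmentation as opposed to per-image filtering.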

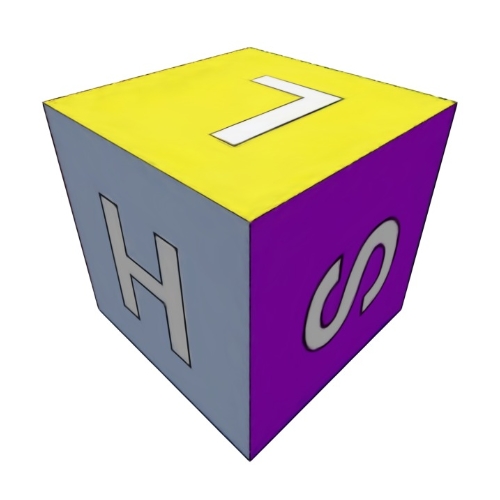

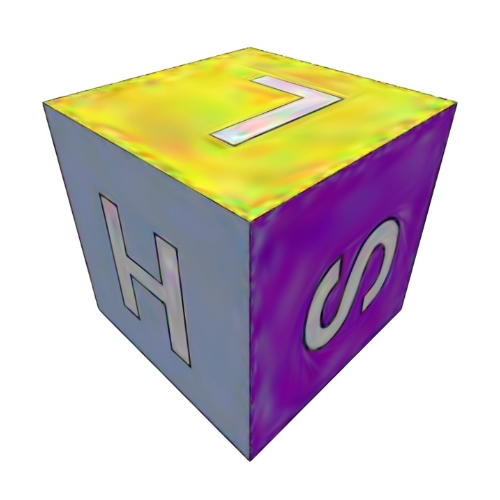

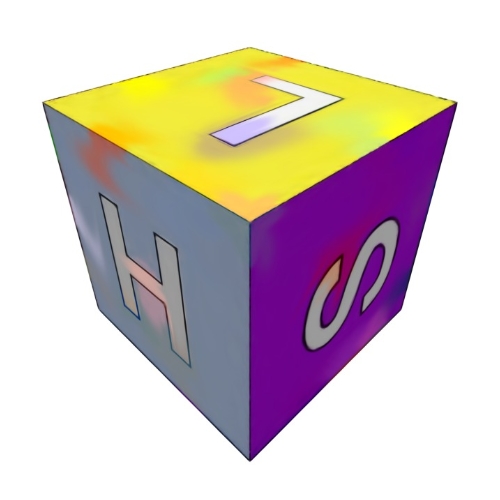

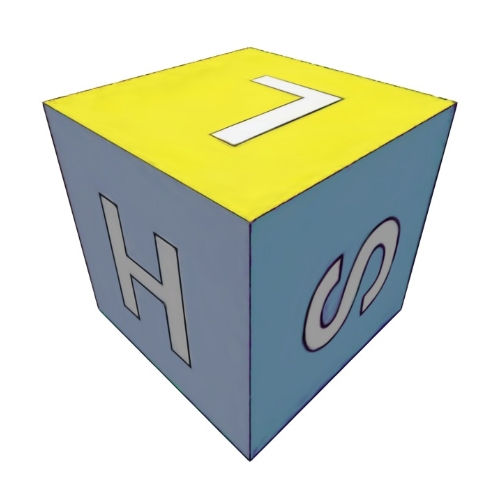

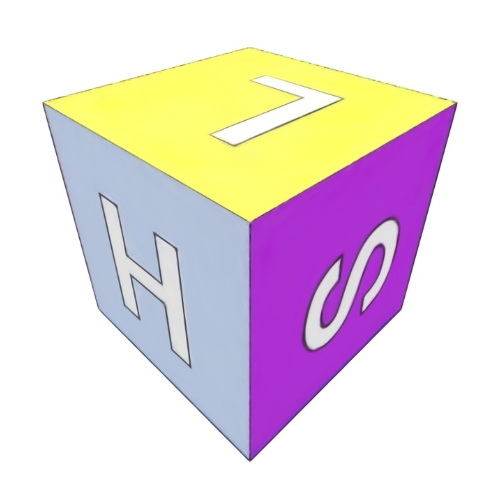

Augmentation types compared on: Cube, Rubber Duck, Globe, 3D Printed Toy.

Our system achieves over 25 consecutive successful reorientations on average under extreme visual conditions that cause traditional estimators to fail.

@article{bhardwaj2026viserdex,
  title={ViserDex: Visual Sim-to-Real for Robust Dexterous In-hand Reorientation},
  author={Bhardwaj, Arjun and Wilder-Smith, Maximum and Mittal, Mayank and Patil, Vaishakh and Hutter, Marco},
  journal={arXiv preprint arXiv:2604.11138},
  year={2026}
}